Performance benchmarking Socket.io 0.8.7, 0.7.11 and 0.6.17 and Node's native TCP

I've been working with Socket.io quite a bit recently. It's a great library. However, after upgrading to 0.8.x, I ran into problems with increased CPU usage. Since performance is very important for high-traffic pubsub implementations, I decided to investigate this further and try to quantify the performance impact of upgrading to a newer version of Socket.io.

I wrote a benchmarking suite (siobench). The benchmark is rather simple. Clients connect one at a time, and a new client is only allowed to connect once the previous one has connected. When the server has used up 5000 milliseconds of CPU time, the benchmark is stopped. Every second, every connected client sends a single message which is echoed back by the server.
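The ramp-up described above can be sketched roughly as follows. This is not siobench's actual code: it uses a plain TCP echo server from Node's `net` module instead of Socket.io, it relies on async/await from current Node versions, and the names `startEchoServer`, `connectClient`, `rampUp` and the `shouldStop` callback are made up for illustration. In the real benchmark the stop condition would be the server's CPU time reaching 5000 ms.

```javascript
const net = require('net');

// Hypothetical echo server: every received chunk is written straight back.
function startEchoServer() {
  return new Promise((resolve) => {
    const server = net.createServer((socket) => {
      socket.on('error', () => {}); // ignore resets from disconnecting clients
      socket.pipe(socket);
    });
    server.listen(0, '127.0.0.1', () => resolve(server));
  });
}

// Open a single client and resolve once it is connected.
function connectClient(port) {
  return new Promise((resolve, reject) => {
    const socket = net.connect(port, '127.0.0.1');
    socket.once('connect', () => resolve(socket));
    socket.once('error', reject);
  });
}

// Connect clients strictly one after another until shouldStop(count) is true.
// Each connected client sends one message per second.
async function rampUp(port, shouldStop) {
  const clients = [];
  while (!shouldStop(clients.length)) {
    const socket = await connectClient(port);
    socket.resume(); // discard the echoed messages
    const timer = setInterval(() => socket.write('ping\n'), 1000);
    socket.on('close', () => clearInterval(timer));
    clients.push(socket);
  }
  return clients;
}
```

For instance, `rampUp(port, (n) => n >= 100)` would stop after 100 clients; swapping in a callback that checks the server's CPU time gives the stop condition used in this post.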

This workload is geared towards a situation where Socket.io is used to notify people of things as part of a larger application: e.g. most of the load is assumed to be idling connections rather than real-time messaging like in, say, a multiplayer game.

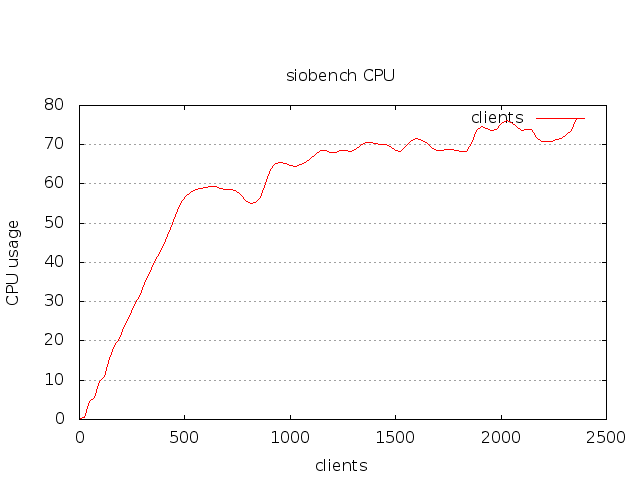

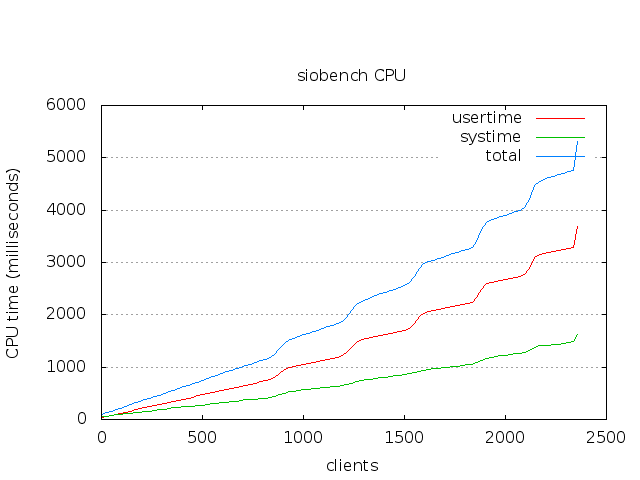

The "end of test" condition is 5000 ms of CPU time, because this seemed to be an easy way to give all implementations the same amount of time. CPU usage % is not accurate, since it depends on how much CPU time the process gets over a particular amount of wall-clock time. In the graphs, the CPU usage % is calculated over a 100 ms interval, while usertime and systime are the actual numbers reported at that particular time.
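To make the relationship between CPU time and CPU usage % concrete, here is a minimal sketch. It assumes a current Node version, where `process.cpuUsage()` reports user and system time in microseconds; that call did not exist in the Node 0.4 used for this post, so this is not how siobench collects its numbers. The helper names and `CPU_BUDGET_MS` are illustrative.

```javascript
const CPU_BUDGET_MS = 5000; // the "end of test" condition used in this post

// Total CPU time (user + system) in milliseconds for one cpuUsage() sample.
function cpuTimeMs(usage) {
  return (usage.user + usage.system) / 1000; // samples are in microseconds
}

// CPU usage % between two samples taken intervalMs of wall-clock time apart.
function cpuPercent(prev, curr, intervalMs) {
  return ((cpuTimeMs(curr) - cpuTimeMs(prev)) / intervalMs) * 100;
}

// True once the process has consumed its CPU time budget.
function budgetExhausted(usage) {
  return cpuTimeMs(usage) >= CPU_BUDGET_MS;
}

const now = process.cpuUsage();
console.log(`CPU time so far: ${cpuTimeMs(now).toFixed(1)} ms`);
console.log(`Budget exhausted: ${budgetExhausted(now)}`);
```

The percentage depends on the wall-clock interval, which is why it fluctuates with scheduling, while the cumulative CPU time does not.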

Summary

| Setup | Result |
| --- | --- |
| Node (0.4.12) using tcp | ~ 8000 connections on a single core |
| socket.io 0.6.17 using websockets | ~ 2300 connections on a single core |
| socket.io 0.7.11 using websockets | ~ 1800 connections on a single core |
| socket.io 0.8.7 using websockets | ~ 1900 connections on a single core |

Note that the absolute numbers are mostly unimportant. I ran this on a 15" MacBook Pro running Arch with the 3.1.04 Linux kernel in VirtualBox, with 4096 MB of RAM, an SSD and four cores (Intel(R) Core(TM) i7-2635QM CPU @ 2.00GHz GenuineIntel GNU/Linux). You can get numbers that are more representative of your system by getting siobench and running it:

    Usage: node siobench.js [env]

    A tool for benchmarking your Socket.io server.

    Available environments:
      0.6.17
      0.6.17_poll
      0.7.11
      0.8.7
      0.8.7_poll
      tcp

You can also write your own benchmarks under ./bench by writing a new server.js (example #1, #2) and a new client.js (example #1, #2). Each benchmark has its own set of npm dependencies installed, so that one can run benchmarks against many versions of socket.io.

Some notes on performance

The relative performance is more interesting.

First, the Node TCP result represents the highest achievable performance on this benchmark, since it only uses the built-in TCP implementation. Compared to this, Socket.io reaches a bit under a third of the performance (~2300 vs ~8000 connections) when using WebSockets.

Second, it appears that 0.8.7 is about 20% slower than 0.6.17 on this benchmark. If I remember correctly, Socket.io 0.7 switched to a new protocol, and there are clearly some performance improvements over 0.7.11 in 0.8.7 (+100 connections in this bench); it's just that the overall performance is still worse in this benchmark than in the old 0.6.17 branch.
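The ratios behind these comparisons are easy to recompute. The snippet below is only a worked calculation using the approximate connection counts reported in this post; the `results` object and the `percentOf` helper are names chosen for this example.

```javascript
// Approximate connection counts from the summary in this post.
const results = {
  tcp: 8000,
  'socket.io 0.6.17': 2300,
  'socket.io 0.7.11': 1800,
  'socket.io 0.8.7': 1900,
};

// Express `value` as a whole-number percentage of `baseline`.
function percentOf(value, baseline) {
  return Math.round((value / baseline) * 100);
}

for (const [name, connections] of Object.entries(results)) {
  console.log(
    `${name}: ${percentOf(connections, results.tcp)}% of TCP, ` +
    `${percentOf(connections, results['socket.io 0.6.17'])}% of 0.6.17`
  );
}
```

With these inputs, 0.6.17 comes out at 29% of the TCP figure and 0.8.7 at 83% of 0.6.17, i.e. roughly 17% fewer connections, which is in the same ballpark as the "about 20%" estimate.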

Working towards higher performance

As this is just a simple benchmark, I don't really have solutions - only some suggestions.

1) A CI build that includes benchmarks and community-contributed test cases

First, I'd love to see a CI build for Socket.io that would include performance benchmarks and community-contributed test cases.

However, setting up a CI build for Socket.io is currently difficult because the bundled test suite only works on OS X. It would be a lot easier to contribute if the tests worked on other platforms.

I am hoping that as Engine.io gets going, the test suite will be fixed so that it can be run on other platforms. Otherwise, contributing improvements will be tricky/impossible since there is no way to tell whether the code works.

2) More realistic performance test scenarios

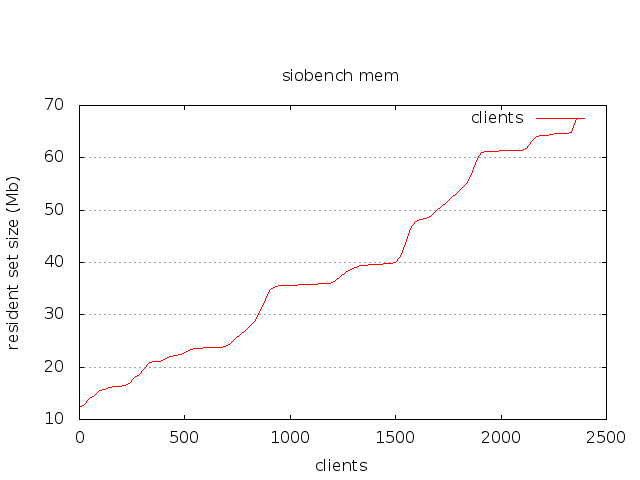

The current test scenario is rather limited in that it mostly tests performance in terms of establishing connections (without terminating them). I'd love to hear more realistic scenario suggestions, particularly from people who have run into memory usage issues.

siobench is only a starting point: it's way better than just looking at htop and wondering whether performance was better in the last version or not. There are still specific questions that should be formulated as replicable tests.

3) A polling transport that works on Node.js

I did write tests for the xhr-polling transport for Socket.io as well. These showed much worse performance, around:

- ~ 550 connections on Socket.io 0.6.17 (vs ~2300 using WS)

- ~ 450 connections on Socket.io 0.8.7 (vs ~ 1900 using WS)

4) Comparative benchmarks

Hopefully, this will help with performance testing new releases of Socket.io and other Comet libraries. Since the plan is that Engine.io will allow people to work with a lower level than Socket.io, there might be new performance oriented versions, and it would be useful to see benchmarks for those. Re: the other Node.js pubsub frameworks: I can't benchmark Faye, because it does not provide the right API out of the box, and Juggernaut uses Socket.io internally.

I'm going to use siobench for internal testing to ensure that the pubsub implementation I am working on (built over Socket.io) does not suffer performance regressions.
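One simple way to turn benchmark numbers into a regression check is to compare a new result against a stored baseline with some tolerance. The sketch below is an assumption about how such a check could look, not part of siobench; `isRegression` and the 5% default tolerance are illustrative choices.

```javascript
// True when `current` is more than `tolerance` (a fraction) below `baseline`.
// Both values are connection counts from two runs of the same benchmark.
function isRegression(baseline, current, tolerance = 0.05) {
  return (baseline - current) / baseline > tolerance;
}

// Using the approximate numbers from this post:
console.log(isRegression(2300, 1900)); // 0.6.17 -> 0.8.7
console.log(isRegression(1800, 1900)); // 0.7.11 -> 0.8.7
```

Since the absolute numbers depend heavily on the machine, the baseline and the new result would need to come from the same hardware for the comparison to mean anything.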

The full graphs are below. Please leave comments and suggestions for improvements - I am hoping that the developer community around Socket.io can help in improving the performance going forward, kind of like what Mozilla did with "arewefastyet.com".

Socket.io 0.6.17 - Websockets - CPU usage and time

Socket.io 0.6.17 - Websockets - resident set size

Comments

Xananax: Hey Mixu. My comment is totally unrelated to your post (which has been useful to me by the way). You don't know me, but I know you a little: I have been following your blog for a while now. But I never had a compelling reason to write something. So even if it messes up a bit with the rigor of coder's comment that should always be constructive, I wanted to express my thanks as your writings have been an invaluable source of information and inspiration to me. Always interesting, always well written, always well presented. Keep up the good work! Wish you the best for this new year.

Mikito Takada: Thanks! This comment made my day. 2012 will be a great year!

Karen: You can check with this: https://github.com/sockjs/sockjs-node ?

Luc: Hi,

I've tried to fetch your project on github and when I do "npm install" I've got an error fetching dependencies:

    npm ERR! git clone git@github.com:mixu/nodeunit-runner.git Warning: Permanently added 'github.com' (RSA) to the list of known hosts.
    npm ERR! git clone git@github.com:mixu/nodeunit-runner.git Permission denied (publickey).
    npm ERR! git clone git@github.com:mixu/nodeunit-runner.git fatal: The remote end hung up unexpectedly
    npm ERR! Error: git "clone" "git@github.com:mixu/nodeunit-runner.git" "/tmp/npm-1330952664150/1330952664173-0.6050085364840925" failed with 128
    npm ERR! at ChildProcess.<anonymous> (/usr/lib/nodejs/npm/lib/utils/exec.js:49:20)
    npm ERR! at ChildProcess.emit (events.js:70:17)
    npm ERR! at maybeExit (child_process.js:361:16)
    npm ERR! at Socket.<anonymous> (child_process.js:466:7)
    npm ERR! at Socket.emit (events.js:67:17)
    npm ERR! at Array.0 (net.js:320:10)
    npm ERR! at EventEmitter._tickCallback (node.js:192:40)

Do you have any ideas ?

rufinus: Just change the github repo url to git://github.com/sockjs/sockjs-node.git in the config file for npm; he used his personal read/write url.

Thanks for this article, it's exactly what we have seen and couldn't believe it.